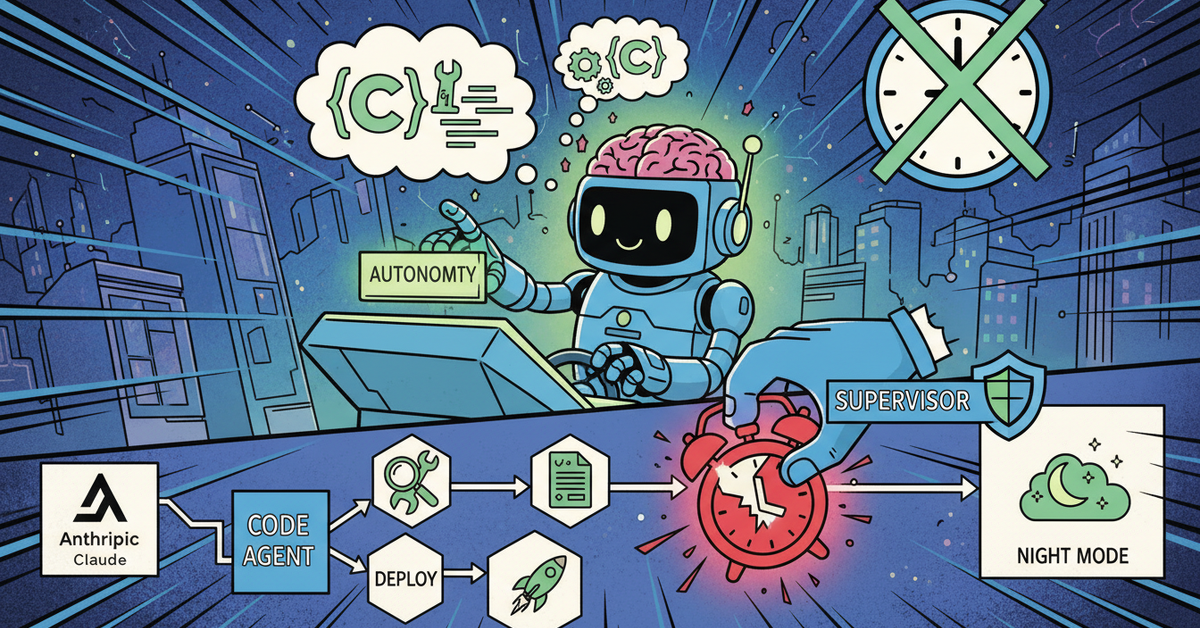

How I Give Claude Code Agents Real Autonomy (And Stop 3am Disasters)

Running Claude Code agents unsupervised is only scary if you haven’t drawn the lines.

I’ve had agents push to the wrong branch, draft content I didn’t want published, and retry failed jobs in loops that burned rate limits at 2am. All of those were solvable — not by adding more rules, but by categorizing what an agent is allowed to do without asking.

Here’s the four-tier autonomy model I run today. I wrote it as a policy document (autonomy-policy-v1.md) in my workspace. My agents read it. My cron jobs operate inside it.

The Core Principle

Maximize autonomous execution. Do not grant unlimited autonomous authority.

That distinction matters. I want my agents doing as much as possible on their own — diagnosing issues, fixing broken cron jobs, updating documentation, retrying failed tasks. But I don’t want them publishing content under my name, deploying to production, or spending money without asking.

The difference between “execute autonomously” and “have unlimited authority” is the entire game. Get it wrong and you either babysit every action (defeating the purpose) or wake up to a mess.

The Four Action Classes

Class 1 — Fully Autonomous

These are actions the agent should do without asking:

- Read, inspect, search, verify, diagnose

- Edit local docs, prompts, skills, memory files

- Fix cron prompts, file paths, routing mistakes

- Retry failed jobs after applying a safe fix

- Improve instructions after recurring mistakes

- Run local validation steps

- Draft content for approval

The key word is “local.” Everything in Class 1 is reversible and stays inside the workspace. If an agent fixes a broken file path in a cron job at 3am, that’s a win. No notification needed.

Class 2 — Autonomous With Notification

The agent does these on its own, then sends me a short note afterward:

- Repair a broken cron job or skill

- Change internal routing or role documents

- Disable a broken recurring job to stop repeated damage

- Clean up stale config or dead files

- Create PRs through the coding agent

- Recover from model or rate-limit fallback issues

Class 2 is where most of the real autonomous value lives. The agent handles the problem, then sends a short message: “Fixed the blog cron — file path was wrong after yesterday’s refactor.” I read it when I read it. No approval needed, but I stay informed.

Class 3 — Approval Required

The agent prepares the best recommendation, then asks:

- Public posting under my brand

- Sending outbound messages, emails, or DMs

- Publishing content

- Production deploys

- Purchases, subscriptions, or ad spend

- Credential rotation or account-security changes

- Major architecture changes

This is where trust boundaries get real. My agent drafts blog posts and X threads, but it doesn’t post them. It sends me an approval packet with the content, the reasoning, and two options: Approve or Deny.

Binary approval is important. Open-ended “what should I do?” questions waste time. The agent should have an opinion and present it as a recommendation.

Class 4 — Forbidden

The agent must not do these without explicit, direct instruction:

- Bypass safety or permission systems

- Exfiltrate private data

- Loosen security boundaries for convenience

- Impersonate me in sensitive contexts

- Make irreversible external changes when uncertainty is high

- Modify its own gateway policy

Class 4 exists because some actions aren’t just “ask first” — they’re “never, unless I specifically tell you to.” The distinction between Class 3 and Class 4 is whether the agent should even be thinking about it proactively.
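As a rough illustration of how the four classes translate into behavior, here is a minimal Python sketch. It is not taken from the policy file: `ActionClass`, `handle`, and the `run`, `notify`, `approved`, and `explicitly_instructed` parameters are hypothetical names chosen for this example.

```python
from enum import Enum
from typing import Callable


class ActionClass(Enum):
    FULLY_AUTONOMOUS = 1        # Class 1: act, no message
    AUTONOMOUS_WITH_NOTICE = 2  # Class 2: act, then report
    APPROVAL_REQUIRED = 3       # Class 3: prepare, then ask
    FORBIDDEN = 4               # Class 4: only on explicit, direct instruction


def handle(action_class: ActionClass,
           run: Callable[[], str],
           notify: Callable[[str], None],
           approved: bool = False,
           explicitly_instructed: bool = False) -> str:
    """Gate a hypothetical action by its class and report what happened."""
    if action_class is ActionClass.FULLY_AUTONOMOUS:
        run()
        return "done"
    if action_class is ActionClass.AUTONOMOUS_WITH_NOTICE:
        summary = run()   # act first
        notify(summary)   # then tell the human what changed
        return "done+notified"
    if action_class is ActionClass.APPROVAL_REQUIRED:
        if approved:
            run()
            return "done"
        return "awaiting approval"
    # Class 4: refuse unless the human asked for exactly this.
    if explicitly_instructed:
        run()
        return "done"
    return "refused"
```

The point of the sketch is that the class, not the individual action, decides whether the agent acts, reports, asks, or refuses.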

The Self-Repair Loop

The autonomy model only works if agents can fix their own problems. Here’s the loop I built into the policy:

1. Detect the failure

2. Classify it: known fix, new local issue, auth problem, approval needed, or external provider issue

3. Apply the safest local fix available

4. Verify the outcome with a real check

5. Record the incident in daily memory

6. Update the durable rule so the same class of failure is less likely

7. Retry if safe

8. Escalate only if still blocked or approval is required

Step 6 is the one that matters most. A repeated mistake is a system failure. The fix isn’t “remember better next time” — it’s changing the prompt, the skill, the cron definition, or the documentation so the failure class disappears.

I call this structural learning. If my agent makes the same mistake twice, the problem isn’t the agent. The problem is that I didn’t change the structure after the first time.
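The loop can be sketched in Python roughly as follows. This is an illustration under assumptions, not the policy's actual implementation: every callable (`classify`, `apply_fix`, `verify`, `update_rule`, `retry`) is a hypothetical hook, the `memory` list stands in for daily memory, and the sketch assumes that only "known fix" and "new local issue" failures are safe to repair locally.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Assumption: only these failure kinds get a local fix; the rest escalate.
LOCAL_KINDS = {"known fix", "new local issue"}


@dataclass
class RepairResult:
    fixed: bool
    escalated: bool
    memory: List[str] = field(default_factory=list)  # stand-in for daily memory


def self_repair(failure: str,
                classify: Callable[[str], str],
                apply_fix: Callable[[str], None],
                verify: Callable[[], bool],
                update_rule: Callable[[str], None],
                retry: Callable[[], bool]) -> RepairResult:
    memory = [f"detected: {failure}"]              # detect
    kind = classify(failure)                       # classify
    memory.append(f"classified: {kind}")
    if kind not in LOCAL_KINDS:
        memory.append("escalated: no safe local fix")
        return RepairResult(False, True, memory)   # escalate
    apply_fix(failure)                             # apply the safest local fix
    verified = verify()                            # verify with a real check
    memory.append(f"fix verified: {verified}")     # record the incident
    if not verified:
        return RepairResult(False, True, memory)   # still blocked, escalate
    update_rule(failure)                           # update the durable rule
    if retry():                                    # retry if safe
        return RepairResult(True, False, memory)
    memory.append("retry failed")
    return RepairResult(False, True, memory)
```

In this sketch the durable rule is updated only after the fix has been verified, so an unverified guess never ends up in a prompt or skill. That ordering is a design choice of the example.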

Agent Boundaries In Practice

I run two agents. The main operator handles triage, diagnosis, routing, documentation, drafting, and workflow repair. A separate coding agent handles implementation — code changes, tests, builds, branches, and PRs.

The operator can modify workspace docs, prompts, memory, cron definitions, and skills autonomously. It delegates coding work to the implementation agent. It must ask before external publishing, destructive changes, or anything touching money.

The coding agent can implement code changes, run tests, use branches and worktrees, and open PRs autonomously. It escalates to the operator for business logic ambiguity, architecture changes, security-sensitive changes, and anything requiring user-facing communication.

This separation means the operator never touches application code directly, and the coding agent never publishes content. Each stays in its lane.
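One way to picture the two lanes is as a small routing table. The Python sketch below is hypothetical: the task labels (`"cron"`, `"pull_requests"`, `"money"`, and so on) and the `route` function are invented for illustration and only mirror the responsibilities described above.

```python
OPERATOR = "operator"
CODING = "coding"

# What each agent may do without asking (labels are illustrative).
AUTONOMOUS = {
    OPERATOR: {"docs", "prompts", "memory", "cron", "skills"},
    CODING: {"code", "tests", "branches", "worktrees", "pull_requests"},
}

# Listed for readability; anything unlisted falls back to the same outcome.
OPERATOR_MUST_ASK = {"external_publishing", "destructive_change", "money"}
CODING_ESCALATES = {
    "business_logic_ambiguity",
    "architecture_change",
    "security_sensitive_change",
    "user_facing_communication",
}


def route(agent: str, task: str) -> str:
    """Decide what an agent does with a task label."""
    if task in AUTONOMOUS[agent]:
        return "proceed"
    if agent == OPERATOR:
        if task in AUTONOMOUS[CODING]:
            return "delegate to coding agent"
        # Covers OPERATOR_MUST_ASK and, conservatively, anything unknown.
        return "ask user"
    # Coding agent: covers CODING_ESCALATES and anything outside its lane.
    return "escalate to operator"
```

Unknown tasks fall through to the conservative answer for each agent, which is an assumption of this sketch rather than something the policy states.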

Making Approval Requests Useful

Bad approval request: “Should I post this to X?”

Good approval request:

- What: Draft thread about Claude Code agent autonomy

- Why: Follows the subagents post from last week, builds the operator arc

- Risk: Low — opinion content, no claims to verify

- Recommendation: Post as-is

- Options: Approve / Deny

The agent should do the thinking. I should only need to make the decision.
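The packet format above maps naturally onto a small data structure. The following Python sketch is an assumption about how such a packet could be modeled; `ApprovalRequest`, `render`, and `parse_decision` are hypothetical names, not part of any real tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovalRequest:
    what: str
    why: str
    risk: str
    recommendation: str

    def render(self) -> str:
        """Format the packet; the options are always binary."""
        return "\n".join([
            f"What: {self.what}",
            f"Why: {self.why}",
            f"Risk: {self.risk}",
            f"Recommendation: {self.recommendation}",
            "Options: Approve / Deny",
        ])


def parse_decision(reply: str) -> bool:
    """Accept only the two allowed answers; anything else is an error."""
    answer = reply.strip().lower()
    if answer == "approve":
        return True
    if answer == "deny":
        return False
    raise ValueError(f"expected Approve or Deny, got {reply!r}")
```

Making every field required means the agent cannot send a bare "Should I post this?" without a reason, a risk assessment, and a recommendation.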

Why This Works Better Than Rules Lists

I tried the rules-list approach first. “Don’t post without approval. Don’t deploy without testing. Don’t spend money.” It doesn’t scale. Every new situation needs a new rule, and agents interpret edge cases differently every time.

The four-class model works because it’s a framework, not a checklist. When my agent encounters a new situation, it classifies it: Is this local and reversible (Class 1)? Does it change internal state (Class 2)? Does it affect the outside world (Class 3)? Is it a hard boundary (Class 4)?

The classification handles edge cases that no rules list could anticipate.
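The four questions can be written down as a tiny decision function. This is a sketch under assumptions: the `Action` fields are hypothetical flags the agent would have to fill in itself, and the function checks the most restrictive question first (a choice made for this example) so a milder flag can never downgrade a risky action.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    description: str
    hard_boundary: bool = False           # e.g. bypassing a permission system
    affects_outside_world: bool = False   # e.g. posting, deploying, spending
    changes_internal_state: bool = False  # e.g. routing docs, cron repair


def classify(action: Action) -> int:
    """Return the action class (1 to 4) for a described action."""
    if action.hard_boundary:
        return 4
    if action.affects_outside_world:
        return 3
    if action.changes_internal_state:
        return 2
    return 1  # local and reversible
```

For example, `classify(Action("publish blog post", affects_outside_world=True, changes_internal_state=True))` returns `3`, because the outside-world question is checked before the internal-state one.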

FAQ

Do agents actually follow autonomy policies?

In my setup, yes, as long as the policy is in a file they read at startup. I keep autonomy-policy-v1.md in my workspace root. Both agents load it as part of their bootstrap context. The policy is written as instructions, not suggestions.

How do you handle the agent making a wrong classification?

It happens. When an agent treats a Class 3 action as Class 2 (does something externally without asking), I add it to the hard rules in HEARTBEAT.md, a file that accumulates failure-driven rules over time. The structural learning loop catches it.
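Recording a new hard rule can be as simple as appending a line to that file. The Python sketch below is a guess at the mechanics, not the actual format of HEARTBEAT.md: the dated bullet layout and the `add_hard_rule` helper are assumptions made for illustration.

```python
from datetime import date
from pathlib import Path
from typing import Optional


def add_hard_rule(heartbeat: Path, rule: str,
                  today: Optional[date] = None) -> bool:
    """Append a dated rule unless it is already present.

    Returns True if the file was changed.
    """
    existing = heartbeat.read_text(encoding="utf-8") if heartbeat.exists() else ""
    if rule in existing:
        return False  # same rule already recorded, keep the file stable
    stamp = (today or date.today()).isoformat()
    with heartbeat.open("a", encoding="utf-8") as f:
        if existing and not existing.endswith("\n"):
            f.write("\n")  # make sure the new rule starts on its own line
        f.write(f"- [{stamp}] {rule}\n")
    return True
```

The duplicate check keeps the file from growing when the same misclassification is reported twice.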

Does this slow agents down?

The opposite. Agents that know exactly what they’re allowed to do move faster than agents that hedge on everything. Clear boundaries enable speed.

I’m documenting the full build process in my Build & Automate community.
